Duncan Watts (photo: Shia Levitt)

Harry Potter author J. K. Rowling probably didn’t mean to conduct a sociology experiment when she published her latest book, a crime novel about a one-legged detective investigating a supermodel’s suicide. But as Duncan Watts, PhD ’97, wrote in an essay for Bloomberg.com in July, that’s pretty much what she did.

Rowling published the book, The Cuckoo’s Calling, under a pseudonym; while it got strong reviews, it sold fewer than 1,500 copies. Then its true authorship came to light—and it rocketed to the top of the bestseller lists.

For Watts, a sociologist and network theorist at Microsoft Research who was recently tapped as an A. D. White Professor-at-Large, the case echoed an online experiment he’d done, dubbed Music Lab, in which 30,000 people were asked to listen to, rate, and download songs by unfamiliar bands. Some of the participants—who were randomly separated into “worlds,” or iterations of the experiment—could see how often others had downloaded the songs; the rest didn’t know. The researchers found that when people could see what others liked, “inequality of success” increased; popular songs got more downloads, and unpopular songs got fewer. “Second and more surprisingly, each song’s popularity was incredibly unpredictable,” Watts writes. “One song, for example, came in first out of forty-eight we sampled in one ‘world,’ but it came in fortieth in another.” So what made success more likely? The more or less random situation of having a few early downloads, which set a song on the path to popularity—a phenomenon Watts calls “cumulative advantage.”

Watts’s point, as it pertains to Rowling, is that quality is hardly a predictor of success—evinced by the fact that a critically well-received work by the writer of the most successful book series of all time went nowhere until it latched onto the coattails of a boy wizard. There’s no intrinsic reason, he argues, that The Cuckoo’s Calling became a bestseller—or, for that matter, why the first Harry Potter launched a global juggernaut of movies and theme parks, when equally worthy works of young adult fiction do not. But Watts admits that his view is hard for many people to swallow. “People just cannot accept that you got struck by lightning,” he says. “They think there has to be a reason. And there is a reason—it’s called cumulative advantage—but we don’t like that. We like the ‘it’s special’ reason. You try to tell Harry Potter fans that it’s just an ordinary book that, in a different version of reality, they wouldn’t have bothered to pay attention to, and they’ll think you’re crazy.”

The Harry Potter phenomenon is one of many Watts explores in his most recent book, Everything Is Obvious, Once You Know the Answer. Subtitled How Common Sense Fails Us, the book examines the fallacy of conventional wisdom in explaining complex systems—why, say, the notions of economy that we apply to our own households don’t translate to international financial markets. If one person can balance a household budget or settle a dispute between neighbors, why can’t Congress fix the deficit, or the right leader bring peace to the Middle East? “We attribute collective change to individuals,” Watts observes. “This is why we compensate CEOs the way we do; why we lionize Steve Jobs; why we have the ‘great man’ view of history; why we think the President actually has an effect on the economy. We’re always overestimating the importance of individuals in driving social change.”

Such a message isn’t necessarily popular or easily understood, notes Watts’s former graduate adviser, math professor Steve Strogatz. “I think it’s hard for people to appreciate the book,” he says. “When I look at the reviews on Amazon, a lot of readers want a simple, facile message. It’s difficult to get a handle on what he’s trying to say. He’s not saying that the great individual carries the day, but he doesn’t deny that great individuals exist. So what is the point? As far as I can see, it’s that it’s very hard to make these things scientific. It would be much more commercial, and perhaps better for him professionally, if he came up with a simpler message. But he’s not going to do that, because he’s honest. So he gives you, as clearly as he can, his very complicated message. And that takes integrity, because it’s a hard sell.”

While the book may not offer user-friendly tropes in the vein of Malcolm Gladwell, to some in academia and beyond, Watts’s approach offers an intriguing and potentially powerful way to approach complex problems—particularly in an era when the Internet can facilitate virtual experiments on a massive scale. Cornell sociologist Michael Macy notes that Watts’s nomination as an A. D. White Professor was supported by a remarkably diverse group of faculty—not just in sociology, but in economics, human development, management, communication, physics, math, ILR, and computer science. “Duncan continues to have a transformative influence on the social and behavioral sciences,” Macy says. “He’s not only a gifted writer but a charismatic speaker, with an uncanny ability to make even highly technical topics come to life for broad audiences of non-specialists.”

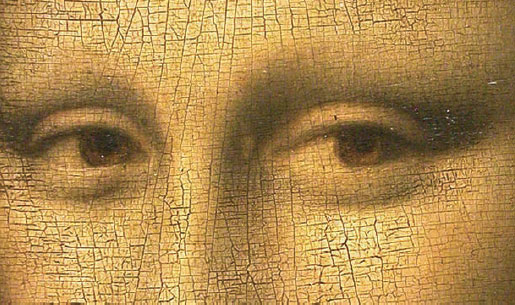

A central part of Watts’s argument is that hindsight isn’t 20/20; it’s reductive and unreliable. In a section on the Mona Lisa, for example (see excerpt), he discusses how the painting languished in relative obscurity for centuries, only becoming world famous after it was stolen from the Louvre in the early 1900s—but since the idea of its greatness owing to a fluke is so inherently unsatisfying, people ascribe post-facto “common sense” explanations. (It’s the smile! It’s the fantastical background! It’s the genius of Leonardo da Vinci!) “Common sense is the mythology—the religion—of the social world,” Watts says. “It’s the simple answer that maps directly onto our experience, the explanation we need to make things make sense. So we hear thunder and say, ‘The gods are fighting.’ That’s something we understand; people get angry and throw things. Common sense is socially adaptive. If we constantly had to grapple with the complexity of the world, we wouldn’t be able to get out of bed in the morning.”

In the preface to Everything Is Obvious, Watts cites a sixty-year-old paper in which sociologist Paul Lazarsfeld described the findings of a study of servicemen during and after World War II—for example, the fact that men from rural backgrounds had better morale than their urban counterparts. That makes perfect sense, Watts notes; after all, rural men in the Forties were accustomed to harsher living and more physical labor, so it follows that military life would seem more pleasant. But then Lazarsfeld pulls a switcheroo: it was actually city natives who were happier in the Army. “Of course, had the reader been told the real answers in the first place she could just as easily have reconciled them with other things that she already thought she knew,” Watts writes. “‘City men are more used to working in crowded conditions and in corporations, with chains of command, strict standards of clothing and social etiquette, and so on. That’s obvious!’”

The point, Watts writes, is that if an answer and its opposite can seem equally obvious through the right mental gymnastics, there’s something wrong with the idea of “obviousness” in the first place. “We make this mistake so often, and it really hurts us,” Watts says. “We can’t understand the social world just by telling a bunch of cute stories. You need theories, experiments, data. It’s tricky and counterintuitive, and everything is more complicated than you think it is. Your intuition is always misleading you into thinking you understand things that you don’t.”

Watts didn’t start off in sociology. Growing up in a small town in southeast Queensland, Australia, he thought he wanted to be a physicist. Then, more or less out of the blue, he decided to join the Navy, attending college at Australia’s national military academy. After graduation, he owed five years of service. “I really wanted to go to sea, but I had bad eyesight and they wouldn’t let me,” Watts recalls with a rueful laugh, “so I ended up in all these jobs I didn’t like.” Watts wound up in his nation’s version of the Pentagon, doing work that eventually sparked an interest in sociology and—though he didn’t have a name for it then—network science. “One thing you learn in the Navy is that you never want to use the chain of command, even though you’re always supposed to, because it breaks; everything goes up and gets jammed,” he says. “So instead you go horizontally. You find the person over in Supply Squadron who was a classmate of a friend of yours, and get them to help you get whatever you need. Whereas, if you go through your commander’s commander’s commander, nothing will ever happen.”

The lesson, he says, is that there’s an important distinction between how we think the world works and how it actually functions. While we’re comfortable with the idea that a few trendsetters dictate fashion, for example—or that a Patient Zero is responsible for the spread of a deadly disease—the truth is far messier and more complicated. “We bring to bear common sense that seems right to us,” he says. “It seems right that the world is hierarchical. If we try to understand how information flows, we say there’s got to be someone special doing it, otherwise we don’t know how to understand it. We don’t have a way of thinking of decentralized systems. When people tried to understand how the brain works, they thought there must be a little guy in there making all the decisions.”

After a couple of years in the Navy, Watts applied to graduate school, winning a Fulbright to study theoretical and applied mechanics at Cornell. (He was eventually able to avoid returning to military service by reimbursing the government for his training costs.) On the Hill, he worked under Strogatz on a project that started with how networks of tree crickets synchronize their chirps, and wound up as a career maker. In work that essentially served as a roadmap for modern network science, Watts and Strogatz cracked what’s known as the “small world” or “six degrees” problem—why, even in a system as large as the population of the Earth, a relative handful of connections unites us all. In the paper—“Collective Dynamics of ‘Small-World’ Networks,” published in Nature in June 1998—the researchers demonstrated the “six degrees” phenomenon in three disparate systems: the power grid of the western U.S., the neural network of the worm C. elegans, and collaborations among actors on the Internet Movie Database.

The paper caused a sensation, prompting stories in the national media—a popular parlor game at the time was “Six Degrees of Kevin Bacon”—and helping Watts get his first book, Small Worlds: The Dynamics of Networks Between Order and Randomness, published by Princeton University Press. It remains one of the most cited papers in the field, with more than 20,000 citations on Google Scholar. But Watts notes that it almost didn’t get published, initially being rejected by Nature because the reviewers didn’t know what to make of it; one of them dismissed it in two highly critical sentences. “There was no such thing as network science,” Watts notes. “I didn’t even know what to call what I was doing. It wasn’t sociology. It wasn’t graph theory. There were no jobs and no funding. Before Steve would let me work on the problem, he made me promise that I wasn’t interested in having an academic career.”

But he did have one; he went on to become a full professor in sociology at Columbia, eventually leaving because, as he puts it, “all my time was sucked up by everything except research.” Jettisoning a tenured professorship at an Ivy League school may sound like career suicide—but it’s typical Watts. “I’ve spent my whole life doing what other people aren’t doing,” he says. “I pretty much always gravitate to the crack between the stools.” He spent five years at Yahoo Research before moving to Microsoft about a year ago. “I’m still interested in the same problems as when I was working with Steve,” Watts muses. “Collective dynamics of social networks—whether it’s risk in financial systems, epidemics of disease, outbreaks of political violence, or changing social norms. These are the biggest issues of our time and they affect everyone, but we have very little idea of why they happen. It’s endlessly shocking to me how unscientific we are about how we go about solving these big social and economic problems—that we leave it to our instincts to make these weighty and consequential decisions. So that’s the mission; that’s what gets me excited.”

Watts is well aware of the fact that he is, in many ways, a poster child for cumulative advantage—how flukes can engender long-term success. He went to grad school because the Navy wouldn’t let him go to sea. The Nature paper got a second look because that one review was so peremptory, the editor sent it out again. The paper gave him the cachet to get Small Worlds published; that book’s editor was instrumental in getting his next one, Six Degrees: The Science of a Connected Age, published by another house. Six Degrees helped him get tenure at Columbia—and so on. “People often think it’s depressing to say that things are random, they’re unpredictable, and that undercuts their meaning,” Watts muses. “They think if the Mona Lisa is just an accident, they’ll never be able to look at it the same way again. But meaning is different from explanation. It’s true that if we re-ran history, some other painting would be famous and not the Mona Lisa. It’s probably true for your life, your relationships; how I met my girlfriend was a total accident that could easily not have happened. Almost anything of importance—meeting Strogatz—was a random fluke. But meaning is a different thing. To say that something is random is not to say that it’s not meaningful. Meaning is a construct that we place on the event once we know it’s important.”

Group Think

An excerpt from Everything Is Obvious explores the ‘wisdom and madness of crowds’

By Duncan Watts

In 1519, shortly before he died, the Italian artist, scientist, and inventor Leonardo da Vinci put the finishing touches on a portrait of a young Florentine woman, Lisa Gherardini del Giocondo, whose husband, a wealthy silk merchant, had commissioned the painting sixteen years earlier to celebrate the birth of their son. By the time he finished it, Leonardo had moved to France at the invitation of King François I, who eventually purchased the painting; thus apparently neither Ms. del Giocondo nor her husband ever got the chance to view Leonardo’s handiwork. Which is a pity really, because five hundred years later that painting has made her face about the most famous face in all of history.

The painting, of course, is the Mona Lisa, and for those who have lived their entire lives in a cave, it now hangs in a bulletproof, climate-controlled case on a wall all by itself in the Musée du Louvre in Paris. Louvre officials estimate that nearly 80 percent of their six million visitors each year come primarily to see it. Its current insurance value is estimated at nearly $700 million—far in excess of any painting ever sold—but it is unclear that any price could be meaningfully assigned to it. The Mona Lisa, it seems fair to say, is more than just a painting—it is a touchstone of Western culture. It has been copied, parodied, praised, mocked, co-opted, analyzed, and speculated upon more than any other work of art. Its origins, for centuries shrouded in mystery, have captivated scholars, and its name has lent itself to operas, movies, songs, people, ships—even a crater on Venus.

Knowing all this, a naïve visitor to the Louvre might be forgiven for experiencing a sense of, well, disappointment upon first laying eyes on the most famous painting in the world. To start with, it is surprisingly small. And being enclosed in that bulletproof box, and invariably surrounded by mobs of picture-snapping tourists, it is irritatingly difficult to see. So when you do finally get up close, you’re really expecting something special—what the art critic Kenneth Clark called “the supreme example of perfection,” which causes viewers to “forget all our misgivings in admiration of perfect mastery.” Well, as they say, I’m no art critic. But when, on my first visit to the Louvre several years ago, I finally got my chance to bask in the glow of perfect mastery, I couldn’t help wondering about the three other da Vinci paintings I had just walked by in the previous chamber, and to which nobody seemed to be paying the slightest attention. As far as I could tell, the Mona Lisa looked like an amazing accomplishment of artistic talent, but no more so than those other three. In fact, if I hadn’t already known which painting was the famous one, I doubt that I could have picked it out of a lineup. For that matter, if you had put it in with any number of the other great works of art on display at the Louvre, I’m quite positive it wouldn’t have jumped out at me as the obvious contender for most-famous-painting award.

Now, Kenneth Clark might well reply that that’s why he’s the art critic and I’m not—that there are attributes of mastery that are evident only to the trained eye, and that neophytes like me would do better simply to accept what we’re told. OK, fair enough. But if that’s true, you would expect that the same perfection that is obvious to Clark would have been obvious to other art experts throughout history. And yet, as the historian Donald Sassoon relates in his illuminating biography of the Mona Lisa, nothing could be further from the case. For centuries, the Mona Lisa was a relatively obscure painting languishing in the private residences of kings—still a masterpiece, to be sure, but only one among many. Even when it was moved to the Louvre, after the French Revolution, it did not attract as much attention as the works of other artists, like Esteban Murillo, Antonio da Correggio, Paolo Veronese, Jean-Baptiste Greuze, and Pierre Paul Prud’hon, names that for the most part are virtually unheard of today outside of art history classes. And admired as he was, up until the 1850s, da Vinci was considered no match for the true greats of painting, like Titian and Raphael, some of whose works were worth almost ten times as much as the Mona Lisa. In fact, it wasn’t until the twentieth century that the Mona Lisa began its meteoric rise to global brand name. And even then it wasn’t the result of art critics suddenly appreciating the genius that had sat among them for so long, nor was it due to the efforts of museum curators, socialites, wealthy patrons, politicians, or kings. Rather, it all began with a burglary.

On August 21, 1911, a disgruntled Louvre employee named Vincenzo Peruggia hid in a broom closet until closing time and then walked out of the museum with the Mona Lisa tucked under his coat. A proud Italian, Peruggia apparently believed that the Mona Lisa ought rightly to be displayed in Italy, not France, and he was determined to repatriate the long-lost treasure personally. Like many art thieves, however, Peruggia discovered that it was much easier to steal a famous work of art than to dispose of it. After hiding it in his apartment for two years, he was arrested while attempting to sell it to the Uffizi Gallery in Florence. But although he failed in his mission, Peruggia succeeded in catapulting the Mona Lisa into a new category of fame. The French public was captivated by the bold theft and electrified by the painting’s unexpected recovery. The Italians, too, were thrilled by the patriotism of their countryman, and treated Peruggia more like a hero than a criminal—before the Mona Lisa was returned to its French owner, it was shown all over Italy.

From that point on, the Mona Lisa never looked back. The painting was to be the object of criminal activity twice more—first, when a vandal threw acid on it, and then when a young Bolivian, Ugo Ungaza Villegas, threw a rock at it. But primarily it became a reference point for other artists—most famously in 1919, when the Dadaist Marcel Duchamp parodied the painting and poked fun at its creator by adorning a commercial reproduction with a mustache, a goatee, and an obscene inscription. Salvador Dalí and Andy Warhol followed suit with their own interpretations, and so did many others—in all, it has been copied hundreds of times and incorporated into thousands of advertisements. As Sassoon points out, all these different people—thieves, vandals, artists, and advertisers, not to mention musicians, moviemakers, and even NASA (remember the crater on Venus?)—were using the Mona Lisa for their own purposes: to make a point, to increase their own fame, or simply to use a label they felt would convey meaning to other people. But every time they used the Mona Lisa, it used them back, insinuating itself deeper into the fabric of Western culture and the awareness of billions of people. It is impossible now to imagine the history of Western art without the Mona Lisa, and in that sense it truly is the greatest of paintings. But it is also impossible to attribute its unique status to anything about the painting itself.

This last point presents a problem because when we try to explain the success of the Mona Lisa, it is precisely its attributes on which we focus our attention. If you’re Kenneth Clark, you don’t need to know anything about the circumstances of the Mona Lisa’s rise to fame to know why it happened—everything you need to know is right there in front of you. To oversimplify only slightly, the Mona Lisa is the most famous painting in the world because it is the best, and although it might have taken us a while to figure this out, it was inevitable that we would. And that’s why so many people are puzzled when they first actually set eyes on the Mona Lisa. They’re expecting these intrinsic qualities to be apparent, and they’re not. Of course, most of us, when faced with this moment of dissonance, simply shrug our shoulders and assume that somebody wiser than us has seen things we can’t see. And yet as Sassoon deftly but relentlessly lays out, whatever attributes the experts cite as evidence—the novel painting technique that Leonardo employed to produce so gauzy a finish, the mysterious subject, her enigmatic smile, even da Vinci’s own fame—one can always find numerous other works of art that would seem as good, or even better.

Of course, one can always get around this problem by pointing out that it’s not any one attribute of the Mona Lisa that makes it so special, but rather the combination of all its attributes—the smile, and the use of light, and the fantastical background, and so on. There’s actually no way to beat this argument, because the Mona Lisa is of course a unique object. No matter how many similar portraits or paintings some pesky skeptic drags out of the dustbin of history, one can always find some difference between them and the one that we all know is the deserving winner. Unfortunately, however, this argument wins only at the cost of eviscerating itself. It sounds as if we’re assessing the quality of a work of art in terms of its attributes, but in fact we’re doing the opposite—deciding first which painting is the best, and only then inferring from its attributes the metrics of quality. Subsequently, we can invoke these metrics to justify the known outcome in a way that seems rational and objective. But the result is circular reasoning. We claim to be saying that the Mona Lisa is the most famous painting in the world because it has attributes X, Y, and Z. But really what we’re saying is that the Mona Lisa is famous because it’s more like the Mona Lisa than anything else.

Reprinted from the book Everything Is Obvious: Once You Know the Answer by Duncan J. Watts. Copyright © 2011 by Duncan Watts. Published by Crown Business, an imprint of the Crown Publishing Group, a division of Random House LLC, a Penguin Random House Company. Used by permission.